Highlights

- AI image generation converts text into visuals using models like DALL·E and Stable Diffusion, allowing anyone to create images without drawing skills.

- A structured workflow includes prompt writing, encoding, latent processing, and image decoding, which directly impacts output quality.

- Prompt quality strongly affects image accuracy: descriptive inputs improve control over composition, lighting, and style.

- Diffusion models dominate modern tools due to high-quality outputs, while GANs and transformers serve specialized purposes.

- Platforms such as Adobe Firefly and Canva AI make AI image generation accessible for beginners and professionals.

- Practical uses include marketing visuals, content creation, product design, and educational materials.

- Limitations include prompt misinterpretation, bias, and occasional visual inconsistencies, but rapid advancements continue to improve performance.

- Future developments focus on real-time generation, higher realism, and deeper integration into creative workflows.

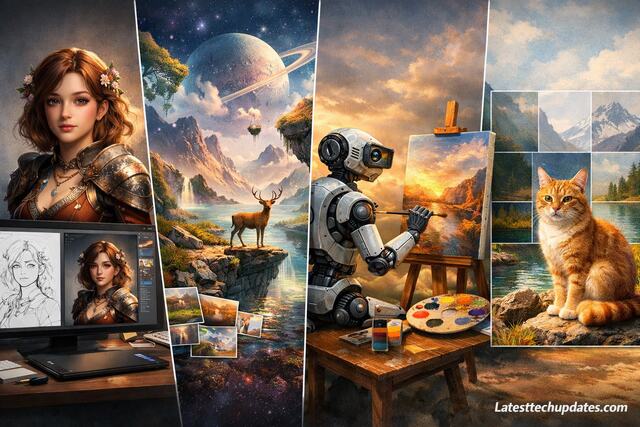

AI image generation works by transforming text descriptions into visual outputs through trained neural networks that understand patterns, styles, and structures. Modern systems such as DALL·E and Stable Diffusion rely on deep learning architectures that connect language understanding with visual synthesis. You can use these systems to create artwork, product mockups, social media visuals, or even concept designs without traditional drawing skills. The process follows a structured pipeline that includes prompt creation, model interpretation, latent space processing, and image decoding.

AI image generation has become a practical tool for designers, marketers, developers, and everyday users. I have personally used these tools to generate blog visuals, thumbnails, and creative experiments, and the learning curve becomes manageable once you understand the workflow step by step. Each stage in the pipeline plays a specific role, and mastering each stage gives you more control over the final result.

What Is AI Image Generation and How Does It Work?

AI image generation refers to the process where a machine learning model converts text or data input into visual content using trained neural networks. These models learn from massive datasets containing images and captions, which allows them to understand relationships between words and visuals.

Most modern systems are built on neural networks such as diffusion models or GANs. Diffusion models like Stable Diffusion start with random noise and progressively denoise it, step by step, based on your prompt until a structured image emerges. GAN-based systems instead use two competing networks: a generator that turns random noise into an image in a single pass, and a discriminator that judges how realistic that image looks.

From my experience, understanding how the model interprets your words changes everything. When you realize that the system is predicting patterns rather than “thinking,” you start writing prompts differently and get better results consistently.

How Does Text Become an Image?

Text becomes an image through embedding and mapping processes where words are converted into numerical representations. Those representations guide the model in shaping visual elements like objects, lighting, and composition.
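
A minimal sketch of this first step follows. It assumes a naive whitespace tokenizer and uses a hash-based stand-in for the learned embedding table; `tokenize`, `embed_token`, and `encode_prompt` are hypothetical names. Real systems use trained subword tokenizers and text encoders such as CLIP, whose vectors actually carry meaning. Here the numbers are arbitrary, and only the shape of the transformation (one vector per token) is illustrated.

```python
import hashlib

EMBED_DIM = 4  # toy size; real text encoders use hundreds of dimensions


def tokenize(prompt: str) -> list[str]:
    """Naive tokenizer: lowercase, treat commas as spaces, split on whitespace."""
    return prompt.lower().replace(",", " ").split()


def embed_token(token: str, dim: int = EMBED_DIM) -> list[float]:
    """Deterministic pseudo-embedding derived from a hash of the token.
    Stands in for a learned embedding table; the values carry no meaning."""
    digest = hashlib.sha256(token.encode("utf-8")).digest()
    return [digest[i] / 255.0 for i in range(dim)]


def encode_prompt(prompt: str) -> list[list[float]]:
    """Map a prompt to one numeric vector per token."""
    return [embed_token(tok) for tok in tokenize(prompt)]


vectors = encode_prompt("a realistic orange cat, soft morning light")
print(len(vectors), "tokens, each a vector of length", len(vectors[0]))
```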

What Role Does Training Data Play?

Training data provides the foundational knowledge that enables the model to recognize objects, styles, and artistic patterns. High-quality datasets result in more accurate and visually appealing outputs.
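
As a hypothetical illustration of why data quality matters, the sketch below filters image-caption pairs before training, dropping tiny images and captions that say nothing about the picture. The `Sample` type and the thresholds (`min_side=256`, `min_words=3`) are assumptions chosen for the example; real training sets are curated with far more elaborate filters.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    caption: str
    width: int
    height: int


def is_usable(sample: Sample, min_side: int = 256, min_words: int = 3) -> bool:
    """Hypothetical quality filter: keep reasonably large images
    paired with captions that actually describe them."""
    big_enough = min(sample.width, sample.height) >= min_side
    descriptive = len(sample.caption.split()) >= min_words
    return big_enough and descriptive


dataset = [
    Sample("an orange cat sleeping on a wooden table", 1024, 768),
    Sample("IMG_0042", 1024, 768),                              # caption is just a file name
    Sample("a red bicycle leaning on a brick wall", 120, 90),   # image too small
]
clean = [s for s in dataset if is_usable(s)]
print([s.caption for s in clean])
```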

What Are the Main Types of AI Image Generation Models?

AI image generation uses several types of models, each with different strengths and use cases. The most common ones include diffusion models, GANs, and transformer-based architectures.

Diffusion models dominate the current landscape because they produce highly detailed and coherent images. GANs remain useful for specific tasks like face generation or style transfer. Transformer-based systems integrate language understanding more deeply into the image creation process.

In practical use, I noticed diffusion models give more control over style and composition, while GANs feel more limited but faster in certain cases. Choosing the right model depends on your goal.

| Model Type | Strengths | Weaknesses |
|---|---|---|
| Diffusion Models | High quality, flexible control | Slower generation |
| GANs | Fast, realistic outputs | Limited diversity |
| Transformers | Strong language understanding | Computationally expensive |

What Are Diffusion Models?

Diffusion models generate images by gradually removing noise from a random starting point, guided by the prompt and learned patterns.
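
The toy sketch below shows the shape of that loop using the deterministic DDIM-style update rule on a four-"pixel" image. It makes loud assumptions: the noise schedule is a simple cosine curve, the step count is arbitrary, and `predict_noise` is an oracle that cheats by knowing the target. In a real model, that function is a trained network conditioned on the prompt embeddings. What the sketch does show faithfully is the mechanics: estimate the noise, infer the clean image, then step to a slightly less noisy sample.

```python
import math
import random

T = 50                           # number of denoising steps (arbitrary choice)
TARGET = [0.2, -0.5, 0.9, 0.0]   # stand-in for the "clean image" the prompt describes

# alpha_bar[t]: fraction of the clean signal that survives at noise level t.
# Cosine-shaped schedule, decreasing from ~1 (t=0) toward 0 (t=T-1).
alpha_bar = [math.cos((t + 1) / (T + 1) * math.pi / 2) ** 2 for t in range(T)]


def predict_noise(x, t):
    """Oracle noise predictor (assumption). A real model replaces this with
    a trained network that sees x, t, and the prompt embeddings."""
    ab = alpha_bar[t]
    return [(xi - math.sqrt(ab) * ti) / math.sqrt(1 - ab) for xi, ti in zip(x, TARGET)]


def ddim_sample(seed=0):
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in TARGET]   # start from pure Gaussian noise
    distances = []
    for t in reversed(range(T)):
        ab = alpha_bar[t]
        ab_prev = alpha_bar[t - 1] if t > 0 else 1.0
        eps = predict_noise(x, t)
        # Clean image implied by the current noisy sample and predicted noise.
        x0_hat = [(xi - math.sqrt(1 - ab) * ei) / math.sqrt(ab) for xi, ei in zip(x, eps)]
        # Deterministic DDIM step: re-noise the estimate to the next, lower noise level.
        x = [math.sqrt(ab_prev) * x0 + math.sqrt(1 - ab_prev) * ei
             for x0, ei in zip(x0_hat, eps)]
        distances.append(math.dist(x, TARGET))
    return x, distances


sample, distances = ddim_sample()
print("max error vs target:", max(abs(a - b) for a, b in zip(sample, TARGET)))
```

Because the predictor here is perfect, the distance to the target shrinks at every step and reaches zero at the end; with a trained network the predictions are only approximate, which is why real outputs vary.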

What Are GANs?

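GANs consist of a generator and a discriminator trained against each other: the generator creates images from random noise, and the discriminator tries to tell those images apart from real ones. This competition pushes the generator toward increasingly realistic outputs.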
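
The competition between the two networks is usually written as the minimax objective from the original GAN formulation. Here $D(x)$ is the discriminator's estimated probability that $x$ is a real image, $G(z)$ is the image the generator produces from random noise $z$, $p_{\text{data}}$ is the distribution of real training images, and $p_z$ is the noise distribution:

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator tries to maximize this value by scoring real images high and generated ones low, while the generator tries to minimize it by producing images the discriminator mistakes for real ones.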

How Do You Write Effective Prompts for AI Image Generation?

Prompt writing directly influences the output quality. A prompt acts as instructions that guide the AI toward producing a specific image. Clear, descriptive, and structured prompts lead to better results.

A strong prompt includes subject, style, lighting, composition, and mood. For example, instead of writing “a cat,” writing “a realistic orange cat sitting on a wooden table with soft morning light” produces a much better result.

From my own usage, I learned that small wording changes can completely alter the output. Adding details like camera angle or artistic style often transforms an average image into something impressive.

| Prompt Element | Example | Impact |
|---|---|---|
| Subject | A futuristic city | Defines main focus |
| Style | Cyberpunk illustration | Sets artistic direction |
| Lighting | Neon lighting at night | Adds atmosphere |
| Composition | Wide-angle shot | Controls perspective |
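
A small helper can assemble these elements into a single prompt so you can swap one element at a time while experimenting. This is a minimal sketch: `build_prompt` is a hypothetical name, and the comma-separated format is a common convention rather than a requirement of any particular tool.

```python
def build_prompt(subject, style=None, lighting=None, composition=None, mood=None):
    """Join prompt elements into one comma-separated prompt.
    Missing or blank elements are skipped so partial prompts still read cleanly."""
    parts = [subject, style, lighting, composition, mood]
    return ", ".join(p.strip() for p in parts if p and p.strip())


prompt = build_prompt(
    subject="A futuristic city",
    style="cyberpunk illustration",
    lighting="neon lighting at night",
    composition="wide-angle shot",
)
print(prompt)
# A futuristic city, cyberpunk illustration, neon lighting at night, wide-angle shot
```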

What Makes a Prompt Powerful?

A powerful prompt combines clarity with detail, allowing the model to interpret the scene accurately without confusion.

What Should You Avoid in Prompts?

Avoid vague or conflicting descriptions because ambiguity often leads to inconsistent or low-quality outputs.
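
Some conflicts can be caught before you generate anything. The sketch below is a simple heuristic, not a feature of any real tool: it flags descriptor pairs that usually contradict each other. The `CONFLICTS` list is a hypothetical example you would extend for your own work, and whole-word matching keeps words like "today" from triggering "day".

```python
import re

# Hypothetical descriptor pairs that usually contradict each other.
CONFLICTS = [
    ("day", "night"),
    ("photorealistic", "cartoon"),
    ("close-up", "wide-angle"),
    ("minimalist", "highly detailed"),
]


def _has_term(text: str, term: str) -> bool:
    """Whole-word (or whole-phrase) match, case-insensitive."""
    return re.search(rf"\b{re.escape(term)}\b", text) is not None


def find_conflicts(prompt: str) -> list[tuple[str, str]]:
    """Return every descriptor pair whose terms both appear in the prompt."""
    text = prompt.lower()
    return [(a, b) for a, b in CONFLICTS if _has_term(text, a) and _has_term(text, b)]


print(find_conflicts("a photorealistic cartoon cat at night on a sunny day"))
```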

What Are the Steps in AI Image Generation Workflow?

AI image generation follows a structured workflow that begins with input and ends with a refined output. Each step contributes to the final quality of the image.

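The process starts with writing a prompt, which a text encoder converts into numerical vectors (embeddings). The model then starts from random noise in latent space, a compressed representation of the image, and refines it over many steps under the guidance of those embeddings. Finally, a decoder turns the refined latent into a full-resolution image.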
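
The data flowing through these stages can be sketched by tracking shapes alone, without running a model. The sizes below are assumptions taken from Stable Diffusion v1 (prompts padded to 77 tokens, 768-dimensional CLIP text embeddings, a 4-channel latent at 1/8 the image resolution); other models use different values. `Stage` and `plan_pipeline` are hypothetical names.

```python
from dataclasses import dataclass

# Sizes match Stable Diffusion v1; treat them as assumptions for other models.
MAX_TOKENS = 77        # prompts are padded/truncated to 77 tokens
EMBED_DIM = 768        # hidden size of the CLIP text encoder
LATENT_CHANNELS = 4    # channels in the VAE latent
DOWNSAMPLE = 8         # VAE spatial compression factor


@dataclass
class Stage:
    name: str
    shape: tuple


def plan_pipeline(width: int = 512, height: int = 512, steps: int = 30) -> list:
    """List the tensor shape produced by each stage; no model is actually run."""
    if width % DOWNSAMPLE or height % DOWNSAMPLE:
        raise ValueError("width and height must be multiples of 8")
    latent_shape = (LATENT_CHANNELS, height // DOWNSAMPLE, width // DOWNSAMPLE)
    return [
        Stage("encode prompt -> text embeddings", (MAX_TOKENS, EMBED_DIM)),
        Stage("random noise in latent space", latent_shape),
        Stage(f"denoise latent over {steps} steps", latent_shape),
        Stage("decode latent -> RGB image", (3, height, width)),
    ]


for stage in plan_pipeline():
    print(f"{stage.name:35} {stage.shape}")
```

The main point of the design is visible in the shapes: denoising runs on a 4×64×64 latent rather than a 3×512×512 image, which is far cheaper, and only the final decode produces full-resolution pixels.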

From my experience, breaking the workflow into steps helps in troubleshooting. When an image looks wrong, you can identify whether the issue comes from the prompt, model, or settings.
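
One practical way to isolate the cause is to fix the random seed, because the seed determines the starting noise. Many generators expose a seed setting for exactly this reason. The sketch below uses a hypothetical `initial_noise` helper built on Python's `random` module to show why identical seeds make runs comparable.

```python
import random


def initial_noise(seed: int, size: int = 8) -> list[float]:
    """Starting noise for one generation; the seed fully determines it."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


# Same seed -> identical starting noise, so any change in the output must come
# from whatever you changed: the prompt, the model, or the settings.
assert initial_noise(42) == initial_noise(42)
# A different seed gives different noise, and therefore a different image.
assert initial_noise(42) != initial_noise(43)
```

In practice this means: keep the seed fixed, change one thing, and compare.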

Input and Encoding

The system converts text into numerical embeddings that represent meaning and relationships.
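
"Relationships" here means that related words end up with similar vectors, which is commonly measured with cosine similarity. The three-dimensional vectors below are hand-made for illustration only; real embeddings are learned and have hundreds of dimensions.

```python
import math

# Hand-made toy "embeddings" (assumption); real values come from a trained encoder.
EMBEDDINGS = {
    "cat":    [0.9, 0.8, 0.1],
    "kitten": [0.85, 0.9, 0.15],
    "car":    [0.1, 0.2, 0.95],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


cat = EMBEDDINGS["cat"]
print("cat vs kitten:", round(cosine_similarity(cat, EMBEDDINGS["kitten"]), 3))
print("cat vs car:   ", round(cosine_similarity(cat, EMBEDDINGS["car"]), 3))
```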

Image Synthesis and Decoding

The model refines random noise into a latent image based on learned patterns, and a decoder then converts that latent into visible pixels.
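
Even after decoding, the raw output still has to be converted into displayable pixel values. A minimal sketch follows, assuming (as with Stable Diffusion's VAE decoder) that decoder values are nominally in the range [-1, 1]. `to_uint8_pixels` is a hypothetical helper that clips stray values and rescales to the 0 to 255 range of an 8-bit image.

```python
def to_uint8_pixels(decoded):
    """Map decoder output in [-1, 1] to 8-bit pixel values in [0, 255].
    Values outside the range are clipped first."""
    pixels = []
    for v in decoded:
        v = min(max(v, -1.0), 1.0)      # clip values the decoder pushed out of range
        scaled = (v / 2 + 0.5) * 255    # [-1, 1] -> [0, 1] -> [0, 255]
        pixels.append(round(scaled))
    return pixels


print(to_uint8_pixels([-1.0, 0.0, 1.0, 1.7]))  # [0, 128, 255, 255]
```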

What Tools and Platforms Are Used for AI Image Generation?

Various platforms provide access to AI image generation, each offering unique features and controls. Popular tools include Canva AI and Adobe Firefly.

Each platform differs in terms of ease of use, customization, and output quality. Some tools focus on beginners with simple interfaces, while others provide advanced controls for professionals.

I personally started with simpler tools before moving to more advanced platforms. That transition made a huge difference in understanding how parameters like guidance scale and sampling affect results.
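
In Stable Diffusion-style tools, "guidance scale" usually refers to classifier-free guidance. At each step, the model predicts noise twice, once with the prompt and once without it, and then extrapolates toward the prompt-conditioned prediction. A minimal sketch of that formula follows; the two-element vectors are made-up stand-ins for real model outputs. For reference, 7.5 is the default guidance scale in Hugging Face diffusers' Stable Diffusion pipeline.

```python
def apply_guidance(eps_uncond, eps_cond, guidance_scale):
    """Classifier-free guidance: start from the unconditional noise prediction
    and move guidance_scale times further in the direction of the prompt."""
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


uncond = [0.10, -0.20]   # prediction without the prompt (made-up numbers)
cond = [0.30, 0.00]      # prediction with the prompt (made-up numbers)
print(apply_guidance(uncond, cond, 1.0))   # scale 1.0: same as cond, up to float rounding
print(apply_guidance(uncond, cond, 7.5))   # larger scale exaggerates the prompt's influence
```

Higher values make images follow the prompt more literally, while very high values tend to produce oversaturated or distorted results. That trade-off is why the setting is worth experimenting with.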

Which Tool Is Best for Beginners?

Beginner-friendly tools offer simple interfaces and preset styles that make it easy to generate images quickly.

Which Tool Is Best for Professionals?

Professional tools provide advanced customization, allowing precise control over image generation parameters.

What Are the Practical Applications of AI Image Generation?

AI image generation has practical uses across industries, including marketing, entertainment, education, and product design. Businesses use generated visuals for advertisements, social media, and branding.

Content creators use AI to generate thumbnails, illustrations, and concept art. Developers integrate AI-generated visuals into apps and games. Educators use these tools to create visual learning materials.

From my experience, one of the biggest advantages is speed. Tasks that once took hours or days can now be completed in minutes, which makes AI image generation highly valuable for productivity.

How Is AI Used in Marketing?

Marketing teams use AI-generated visuals for campaigns, ads, and social media content to increase engagement.

How Is AI Used in Design?

Designers use AI for rapid prototyping, concept visualization, and creative experimentation.

What Are the Limitations and Future of AI Image Generation?

AI image generation still has limitations, including inaccuracies, bias in outputs, and lack of true understanding. Models may produce distorted images or misinterpret prompts.

Ethical concerns also arise, such as copyright issues and misuse of generated content. Developers continue to improve models to address these challenges.

Looking ahead, AI image generation is likely to become more precise, faster, and more tightly integrated into everyday tools. Based on my experience, improvements are arriving rapidly, and each new version feels significantly more capable than the last.

What Are Current Limitations?

Current limitations include prompt misinterpretation, inconsistent details, and dependency on training data quality.

What Does the Future Look Like?

Future developments will focus on better realism, real-time generation, and deeper integration with creative workflows.

Conclusion

AI image generation transforms text into visuals through structured processes involving neural networks, prompt engineering, and iterative refinement. Understanding how models work, how prompts influence results, and how workflows operate gives you far greater control over output quality. In my own experimentation, consistent practice has led to steadily better results, and mastering these tools opens opportunities in creativity, productivity, and innovation. Future advancements will continue to improve accuracy and accessibility, making AI image generation an essential skill in digital creation.

FAQs

What is AI image generation in simple terms?

AI image generation creates images from text descriptions using machine learning models trained on visual data.

Do I need technical skills to use AI image generators?

Basic tools require no coding skills, while advanced tools may involve technical understanding for better control.

Which AI tool is best for image generation?

Popular tools include DALL·E, Midjourney, and Stable Diffusion, each offering unique features.

Can AI replace human designers?

AI supports designers by speeding up workflows but does not replace human creativity and decision-making.

How can I improve my AI-generated images?

Improving prompts, experimenting with styles, and adjusting parameters significantly enhance output quality.